The facts about Facebook's fact-checking program

Facebook loves to talk about its third-party fact-checking program as proof that it's committed to fighting disinformation. CEO Mark Zuckerberg talks about it. Vice President Nick Clegg talks about it. The program is mentioned on Facebook's corporate blog again and again and again and again and again.

But what is the actual scope and impact of the program? And how much is Facebook really invested in its success?

Facebook has seven active fact-checking partners that focus on content in the United States — Politifact, Factcheck.org, Associated Press, Lead Stories, Agence France-Presse, Science Feedback, and The Daily Caller. Popular Information asked all of them how much content they were able to fact-check on a monthly basis and what resources each publication devoted to the task. All but Science Feedback and The Daily Caller provided responses. In addition, Popular Information reviewed each publication's website, the information each provided to the International Fact-Checking Network, and other publicly available information.

Taken together, this data provides an accounting of Facebook's fact-checking efforts in the United States.

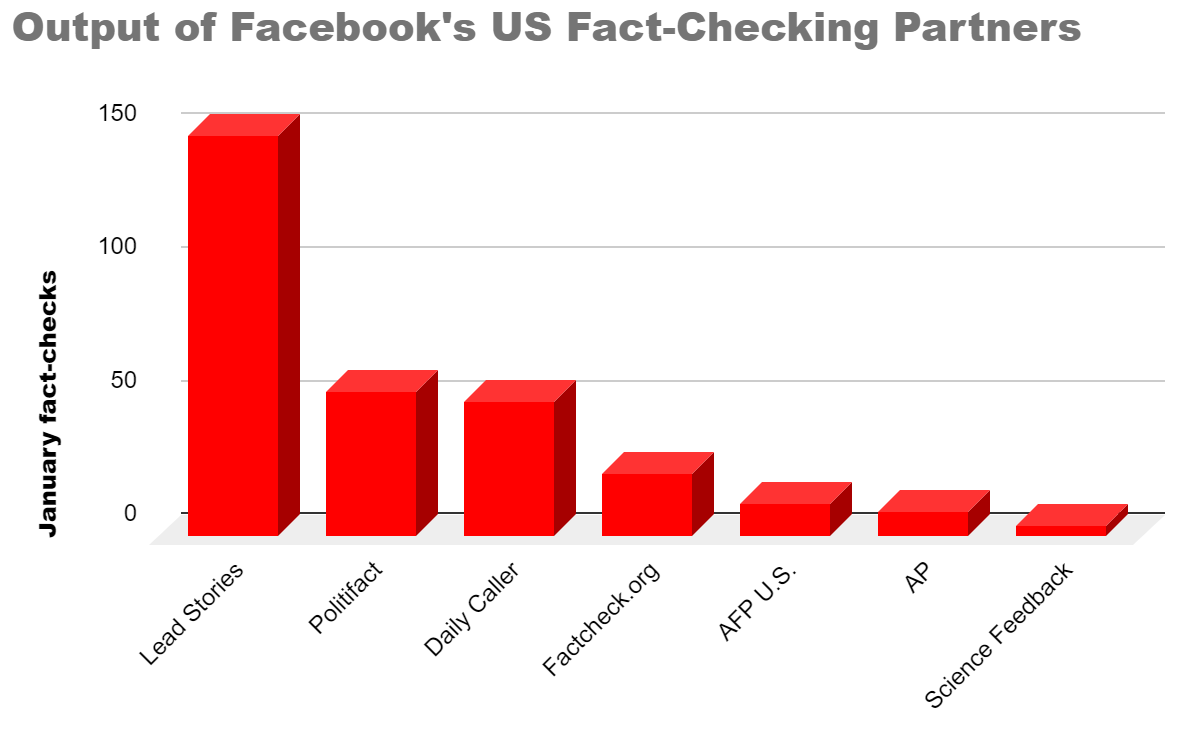

In total, these fact-checkers conducted 302 fact checks of Facebook content in January 2020.

Content that is rated false by Facebook's fact-checking partners is downgraded in the algorithm and appears less frequently on Facebook. Before users share this content, they are given a warning that a fact-checking partner rated it false.

The output of each partner varies substantially. Half of all fact checks were performed by Lead Stories, a small publication that, until last month, had just two employees. Meanwhile, the Associated Press, one of the largest journalism organizations in the United States, performed just a handful of fact checks. Yesterday, Facebook announced that another large wire service, Reuters, will be joining the program.

The overall volume of fact checks provides some perspective. Facebook has more than 200 million users in the United States, posting millions of pieces of content every day. The reality is that almost nothing on Facebook is fact-checked.

Facebook declined to comment on the output of its third-party fact-checking program in the United States. But, in a statement to Popular Information, Facebook said that the program was effective:

We’re proud that in just three years, we’ve assembled a diverse network of over 50 fact-checking partners in 40 languages around the globe — unmatched on other social platforms — that is reducing misinformation on Facebook.

Emily Bell, director of the Tow Center for Digital Journalism at Columbia University's Graduate School of Journalism, told Popular Information that she found the total number of fact checks "very low" relative to the size of the problem. "Is this a serious thing for them? So far, everything I've seen suggests the answer is no," Bell added.

Lucas Graves, a professor at the University of Wisconsin who wrote a book on political fact-checking, agreed with Bell's overall assessment. "Is it adequate to the task? No, absolutely not," Graves told Popular Information. Graves added that the fact-checking program might have other benefits, like improving Facebook's algorithms.

Baybars Örsek, the director of the International Fact-Checking Network, which certifies Facebook's fact-checking partners, told Popular Information that the "scope of the program definitely has room to grow not only in the U.S. but around the world."

Facebook's tiny investment in U.S. fact-checking

In an earnings call on January 29, Facebook disclosed that it had $71 billion in revenue in 2019. How much of that did it spend on its U.S. fact-checking program? Facebook declined to comment. But, relatively speaking, it's not much.

Two fact-checkers, Lead Stories and Factcheck.org, disclosed their 2019 funding from Facebook. Lead Stories was paid $359,000 by Facebook in 2019, and Factcheck.org was paid about $229,600. Lead Stories and Factcheck.org account for more than half of all the Facebook content reviewed by U.S. fact-checkers in January 2020.

Assuming that Facebook paid each of its seven U.S. fact-checkers roughly the same amount, about $300,000, even though most were less productive, Facebook's total investment in 2019 would be about $2 million. That's roughly 0.003% of its 2019 revenue. For perspective, it takes Facebook about 15 minutes to bring in $2 million in revenue. So one reason so little U.S. content on Facebook is fact-checked is that Facebook spends very little money on the program.

As a result, there are few people who work exclusively on fact-checking Facebook content. Factcheck.org told Popular Information that it has two people engaged full-time in the effort and has recently hired a third to coordinate and edit the activity. Lead Stories, which focuses its work almost exclusively on Facebook, says it had two staffers in 2019 but recently expanded its staff to eight people. But other entities, like the AP and Politifact, have no dedicated staff devoted to Facebook fact-checking. Instead, their staff balances Facebook content with traditional fact-checking activities like scrutinizing claims by politicians.

Örsek noted that Facebook was "already one of the highest investors in fact-checking globally" but acknowledged that "more investment is highly needed to combat the misinformation on the platform."

While Facebook claims its current investment in third-party fact-checking is making an impact, Örsek said Facebook should be "more transparent on the reach of viral misinformation and the impact of fact-checking treatment through making figures publicly available."

Why Facebook outsources fact-checking

Other Facebook policies, like its prohibition on hate speech, are enforced by Facebook itself. Why does the company outsource its fact-checking?

Facebook's third-party fact-checking program was created in the wake of criticism over Facebook's role in the 2016 election. A former Facebook employee familiar with the process told Popular Information that, following the 2016 election, there was a proposal to create a fact-checking program inside Facebook. But ultimately, that proposal was shelved, the former employee said, over concerns that it would expose Facebook politically and leave it vulnerable to charges of bias.

The third-party fact-checking program was favored, according to the former Facebook employee, because it allowed Facebook to shield itself from criticism. Facebook emphasizes that it does not request any fact checks from its partners. Instead, each fact-checker makes its own decisions.

This may also explain why Facebook's funding of its third-party fact-checkers is so minimal. If Facebook accounted for the majority of one of these entities' funding, it could be viewed as responsible for that entity's work product. (Additionally, the entities themselves may not want their relationship with Facebook to make up the bulk of their activities.)

Time is not on their side

On Facebook, information can spread very rapidly. In a matter of hours, a piece of content — true or not — can reach millions of people.

Last month, U.S. fact-checkers conducted 302 fact checks of Facebook content. But much of that work was done far too slowly to make a difference. For example, Politifact conducted 54 fact checks of Facebook content in January 2020, but just nine of them were completed within 24 hours of the content being posted to Facebook. Fewer than half, 23, were completed within a week.

Örsek told Popular Information that "fact-checkers should...prioritize working around the clock to provide faster response while maintaining the integrity of the fact check."

Facebook says that it compensates for this delay by downranking content while it waits in the fact-checking queue. What does that mean? Facebook provides its fact-checking partners with a queue of content that an automated system has flagged as potentially inaccurate. For example, a post that attracts comments expressing disbelief, such as "I can't believe it!", might be placed in the queue. While Facebook waits to see whether a fact-checker will review the content, it downranks that content, meaning it shows up less frequently in people's feeds.

According to the former Facebook employee, however, this process is problematic. It's difficult for an algorithm to figure out whether something is true. So the Facebook queue is full of false positives. That means a lot of accurate information is suppressed.

The Daily Caller factor

The Daily Caller, a right-wing publication founded by Fox News' Tucker Carlson, is one of Facebook's fact-checking partners. As Popular Information previously reported, The Daily Caller was added to the program at the behest of Facebook executive Joel Kaplan after conservatives claimed that publications like the Associated Press and Politifact were too liberal. Facebook added The Daily Caller even though the site has a history of inaccurate reporting. There are more reasons to be concerned.

The Daily Caller spun up a separate website, Check Your Fact, to publish its fact-checking work for Facebook. According to its 2019 application for accreditation by the International Fact-Checking Network, Check Your Fact spends about $200,000 per year on this work.

Check Your Fact also disclosed that about half of its funding comes from "a $100,000 grant from the Searle Freedom Trust." The Searle Freedom Trust is a major funder of organizations that push climate disinformation and other right-wing causes. It funds, for example, the CO2 Coalition, a group that claims increased carbon dioxide emissions will benefit the Earth.

So one of Facebook's fact-checking partners is funded by a group that seeks to push disinformation. To date, Check Your Fact's work on Facebook content has not been terrible. But, heading into the 2020 election, Facebook is giving The Daily Caller power to slow the distribution of any information on its platform. It does not inspire confidence.

CORRECTION (2/13): This story initially listed Facebook’s payments to Lead Stories in 2018. It has now been updated with Facebook’s 2019 payment.

Thanks for reading!